Introduction

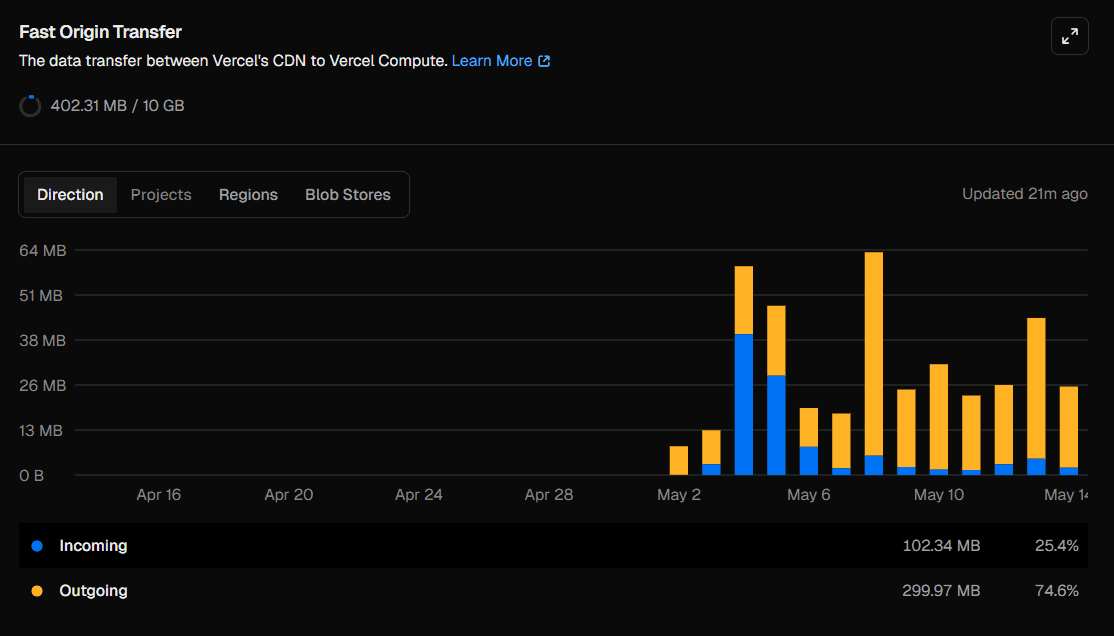

If you've spent any time in the Vercel dashboard, you've probably seen a metric called Fast Origin Transfer sitting under your usage stats. For a long time I ignored it — until I noticed it had climbed to 402 MB and I had no idea why.

In this post I'll explain exactly what Fast Origin Transfer is, what the incoming and outgoing numbers mean, what causes usage to spike, and how to keep your project well within Vercel's 10 GB free tier limit.

What is Fast Origin Transfer?

Fast Origin Transfer is the data transferred between Vercel's CDN edge network and Vercel Compute (your serverless functions, Server Components, and API routes). It is not the bandwidth between Vercel and your end users — that's tracked separately.

Think of it this way:

1. A user makes a request to your site.
2. Vercel's CDN edge receives it first.
3. If the response isn't cached, the edge forwards the request to Vercel Compute (your server).
4. Compute generates the response and sends it back to the edge.
5. The edge serves it to the user.

The data moving in steps 3 and 4 — between edge and compute — is what Vercel calls Fast Origin Transfer. The "Fast" refers to the fact that this transfer happens over Vercel's internal private network, not the public internet.
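To make that flow concrete, here is a toy model in TypeScript. It is a sketch under simplifying assumptions, not how Vercel's CDN works internally. The `EdgeCache` class, the compute callback and the byte counts are all hypothetical. It counts only the bytes that cross the edge/compute boundary, which is the part the dashboard reports as Fast Origin Transfer.

```typescript
// Toy model of an edge cache in front of a compute function.
// Hypothetical names and numbers; this is not Vercel's implementation.
// Bodies are ASCII here, so string length equals byte count.

type Method = "GET" | "POST";

interface OriginResponse {
  body: string;
  cacheable: boolean;
}

interface TransferStats {
  incoming: number; // bytes sent edge -> compute (request payloads)
  outgoing: number; // bytes sent compute -> edge (responses)
}

type ComputeFn = (path: string, body: string) => OriginResponse;

class EdgeCache {
  private readonly cache = new Map<string, string>();
  private readonly compute: ComputeFn;
  readonly stats: TransferStats = { incoming: 0, outgoing: 0 };

  constructor(compute: ComputeFn) {
    this.compute = compute;
  }

  handle(method: Method, path: string, requestBody = ""): string {
    // Only GET responses are looked up in (and stored in) the cache.
    if (method === "GET") {
      const cached = this.cache.get(path);
      // Cache hit: served from the edge, compute is never touched.
      if (cached !== undefined) return cached;
    }

    // Cache miss: the request crosses edge -> compute (incoming)...
    this.stats.incoming += requestBody.length;
    const res = this.compute(path, requestBody);
    // ...and the response crosses compute -> edge (outgoing).
    this.stats.outgoing += res.body.length;

    if (method === "GET" && res.cacheable) this.cache.set(path, res.body);
    return res.body;
  }
}

// Usage: one cacheable 1,000-byte page and one uncached form endpoint.
const demo = new EdgeCache((path) =>
  path === "/api/form"
    ? { body: "ok", cacheable: false }
    : { body: "x".repeat(1000), cacheable: true }
);
demo.handle("GET", "/");                           // miss: 1,000 bytes outgoing
demo.handle("GET", "/");                           // hit: no transfer
demo.handle("POST", "/api/form", "y".repeat(200)); // 200 bytes in, 2 bytes out
// demo.stats is now { incoming: 200, outgoing: 1002 }
```

The second GET costs nothing, which is why caching shows up directly in this metric.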

Incoming vs Outgoing — what's the difference?

In my dashboard, I could see two numbers:

- Incoming: 102.34 MB (25.4%). This is data flowing from the CDN edge into Vercel Compute: request payloads such as POST bodies, file uploads and form data sent by your users that need to reach your server functions.

- Outgoing: 299.97 MB (74.6%). This is data flowing from Vercel Compute out to the CDN edge: your server's responses, such as HTML pages, JSON from API routes, images served by your server, and Server Component payloads.

The 74.6% outgoing split is completely normal. Your server almost always sends more data than it receives — you send full HTML pages and JSON responses in exchange for small request payloads.
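The percentages are each direction's share of the combined total, which is where the 402 MB figure comes from. Here is a quick check using the two dashboard numbers. The function name is my own, not part of any Vercel API.

```typescript
// Each direction's share of the total, rounded to one decimal place.
// Inputs are the dashboard figures in MB.
function transferSplit(incomingMB: number, outgoingMB: number) {
  const total = incomingMB + outgoingMB;
  const pct = (mb: number) => Math.round((mb / total) * 1000) / 10;
  return { totalMB: total, incomingPct: pct(incomingMB), outgoingPct: pct(outgoingMB) };
}

const split = transferSplit(102.34, 299.97);
// split.totalMB ≈ 402.31, split.incomingPct = 25.4, split.outgoingPct = 74.6
```

Put differently, my server sends back roughly 2.9 bytes for every byte it receives.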

Reading the chart

Looking at my usage chart, a few things stood out:

- Zero usage before May 2: my site had very little traffic in mid-to-late April.

- Sharp spike on May 4-5: the tallest bars hit nearly 64 MB in a single day. This matched a day I shared a project on social media and got a surge of visitors.

- Consistent daily usage from May 6 onward: 20-30 MB per day of mostly outgoing (yellow) transfer. That tells me my server-rendered pages are being requested regularly, and suggests my CDN cache hit rate could be better.

The blue (incoming) bars are tiny compared to the yellow (outgoing) ones. That suggests most of my traffic is GET requests (page loads, API reads) rather than POST requests with large bodies.

What drives Fast Origin Transfer usage?

Here are the most common causes:

- Server-side rendered pages (SSR): every uncached SSR request generates a round trip between the edge and compute. More SSR means more transfer.

- API routes: every call to your `/api/*` endpoints passes through the edge to compute and back.

- Large API responses: if your API returns big JSON payloads (e.g. an entire database table), that's a lot of outgoing transfer per request.

- File uploads: if users upload images or documents through your Next.js API routes, those bytes count as incoming transfer.

- Low cache hit rate: pages that could be statically cached but aren't will generate unnecessary transfer on every visit.
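These causes fit a simple back-of-the-envelope model. Only cache misses reach compute, and each miss costs its request body coming in and its response going out. The sketch below assumes exactly that and ignores headers and protocol overhead. The `RouteTraffic` shape and the traffic numbers in the example are made up for illustration.

```typescript
// Simplified Fast Origin Transfer estimate for one route.
// Assumption: each cache miss costs requestBytes in and responseBytes out;
// cache hits cost nothing. Headers and protocol overhead are ignored.
interface RouteTraffic {
  requests: number;      // total requests to the route in the period
  cacheHitRate: number;  // 0..1, share of requests served from the edge cache
  requestBytes: number;  // average request payload (POST bodies, uploads)
  responseBytes: number; // average response size (HTML, JSON, RSC payload)
}

function estimateTransfer(t: RouteTraffic) {
  if (t.cacheHitRate < 0 || t.cacheHitRate > 1) {
    throw new RangeError("cacheHitRate must be between 0 and 1");
  }
  const misses = t.requests * (1 - t.cacheHitRate);
  return {
    incomingBytes: misses * t.requestBytes,
    outgoingBytes: misses * t.responseBytes,
  };
}

// Hypothetical route: 10,000 views of a 50 KB server-rendered page.
const uncached = estimateTransfer({ requests: 10_000, cacheHitRate: 0, requestBytes: 0, responseBytes: 50_000 });
const mostlyCached = estimateTransfer({ requests: 10_000, cacheHitRate: 0.9, requestBytes: 0, responseBytes: 50_000 });
// uncached.outgoingBytes is 500,000,000 (500 MB); mostlyCached.outgoingBytes is about 50 MB
```

The model is linear. Raising the hit rate from 0% to 90% cuts origin transfer by 90%, and halving the response size halves it. Uploads scale the same way on the incoming side.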

How to reduce Fast Origin Transfer usage

If you're approaching the 10 GB limit (or just want to optimise), here's what I recommend:

1. Cache aggressively with static generation

Pages that don't need real-time data should be statically generated. A cached static page served from the edge never touches Vercel Compute — zero transfer.

```js
// Next.js: force static generation
export const dynamic = "force-static";

// Or use generateStaticParams for dynamic routes
export async function generateStaticParams() {
  const posts = await getPosts();
  return posts.map((post) => ({ slug: post.slug }));
}
```

2. Use Incremental Static Regeneration (ISR)

For pages that need to update but not on every request, ISR regenerates the page in the background and caches it at the edge. Only one compute request per revalidation window, not one per visitor.
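To see why that matters, here is a toy simulation of a revalidation window. It is my simplified model, not how Next.js or Vercel actually implement ISR. The first request after the window expires is treated as serving the stale page and triggering one background regeneration. The function name and the traffic pattern are hypothetical.

```typescript
// Toy ISR model: count how many times compute renders the page.
// Simplification: the first request after the window expires serves the
// stale page and triggers exactly one background regeneration.
function countRegenerations(requestTimesSec: number[], revalidateSec: number): number {
  let computeCalls = 0;
  let generatedAt: number | null = null;

  for (const t of [...requestTimesSec].sort((a, b) => a - b)) {
    if (generatedAt === null) {
      // No cached copy yet: this request renders the page on compute.
      computeCalls++;
      generatedAt = t;
    } else if (t - generatedAt >= revalidateSec) {
      // Stale: serve the old page, regenerate once in the background.
      computeCalls++;
      generatedAt = t;
    }
    // Otherwise it's a fresh cache hit and compute is not called.
  }
  return computeCalls;
}

// 600 visitors, one per second for 10 minutes, with revalidate = 60.
const visits = Array.from({ length: 600 }, (_, i) => i);
countRegenerations(visits, 60); // 10 compute calls instead of 600
```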

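```jsx
// Revalidate every 60 seconds
export const revalidate = 60;

export default async function BlogPost({ params }) {
  // `await` works whether params is a plain object (Next.js 14) or a Promise (Next.js 15)
  const { slug } = await params;
  const post = await getPost(slug);
  // Illustrative markup: render the post however your app does
  return <article><h1>{post.title}</h1></article>;
}
```

3. Add Cache-Control headers to API routes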

```js
export async function GET() {
  const data = await fetchPublicData();
  return Response.json(data, {
    headers: {
      "Cache-Control": "public, s-maxage=300, stale-while-revalidate=600",
    },
  });
}
```

This tells Vercel's edge to cache the API response for 5 minutes (`s-maxage=300`). Once that expires, the edge keeps serving the stale copy for up to another 10 minutes (`stale-while-revalidate=600`) while it fetches a fresh one in the background. Under steady traffic, your compute function runs roughly once per 5-minute window for each cached URL instead of once per request.
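Here is a small sketch of how a shared cache interprets that header at different ages. It follows my simplified reading of the standard semantics (`s-maxage` from RFC 9111, `stale-while-revalidate` from RFC 5861), not Vercel's actual implementation. It parses only those two directives, and the helper names are my own.

```typescript
// Simplified shared-cache interpretation of s-maxage + stale-while-revalidate.
// Only these two directives are parsed; everything else is ignored.
type CacheState = "fresh" | "stale-while-revalidate" | "expired";

function directiveSeconds(header: string, name: string): number | undefined {
  for (const part of header.split(",")) {
    const directive = part.trim();
    const eq = directive.indexOf("=");
    if (eq === -1) continue; // e.g. "public" has no value
    const key = directive.slice(0, eq).trim().toLowerCase();
    const value = Number(directive.slice(eq + 1).trim());
    if (key === name && Number.isFinite(value) && value >= 0) return value;
  }
  return undefined;
}

function cacheState(header: string, ageSec: number): CacheState {
  const sMaxAge = directiveSeconds(header, "s-maxage") ?? 0;
  const swr = directiveSeconds(header, "stale-while-revalidate") ?? 0;
  // Fresh: served from the edge cache, compute is not called.
  if (ageSec < sMaxAge) return "fresh";
  // Stale but inside the window: served from cache while compute refreshes it.
  if (ageSec < sMaxAge + swr) return "stale-while-revalidate";
  // Too old: the request has to wait for compute.
  return "expired";
}

const header = "public, s-maxage=300, stale-while-revalidate=600";
cacheState(header, 120); // "fresh"
cacheState(header, 450); // "stale-while-revalidate"
cacheState(header, 960); // "expired"
```

Only the "expired" case makes the visitor wait on compute. In the stale window, one background request refreshes the cache for everyone.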

4. Paginate large API responses

Instead of returning 1000 items in one response, paginate to 20-50 items per page. Smaller responses = less outgoing transfer per request.
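Returning 20 items instead of 1,000 cuts each response to roughly 1/50th of the bytes, assuming items of similar size. The important parts are clamping the page size, so a client can't ask for everything at once, and deriving the offset from the page number. Here is a sketch that produces the `take`/`skip` pair for a Prisma-style `findMany` call like the one below. The helper name and the 20/50 limits are my own illustrative choices, not library defaults.

```typescript
// Hypothetical helper: turn ?page= and ?pageSize= query values (as returned by
// URLSearchParams.get, i.e. string | null) into a take/skip pair.
const DEFAULT_PAGE_SIZE = 20;
const MAX_PAGE_SIZE = 50;

function pageArgs(pageParam: string | null, sizeParam: string | null = null) {
  // Missing or invalid values fall back to page 1 and the default size.
  const page = Math.max(1, Math.floor(Number(pageParam) || 1));
  const requested = Math.floor(Number(sizeParam) || DEFAULT_PAGE_SIZE);
  // Clamp so a client can never pull the whole table in one response.
  const take = Math.min(MAX_PAGE_SIZE, Math.max(1, requested));
  return { take, skip: (page - 1) * take };
}

pageArgs("3");         // { take: 20, skip: 40 }
pageArgs(null);        // { take: 20, skip: 0 }
pageArgs("1", "1000"); // { take: 50, skip: 0 }
```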

```js
// Bad: returns everything
const allPosts = await db.post.findMany();

// Good: paginated (`page` is the 1-based page number, e.g. from the query string)
const posts = await db.post.findMany({
  take: 20,
  skip: (page - 1) * 20,
});
```

5. Use Vercel Blob for file uploads

Instead of routing file uploads through your API routes (which burns incoming Fast Origin Transfer), upload directly to Vercel Blob from the client. The file goes straight to Blob storage, so the upload bytes bypass your compute function. Only a small request to generate an upload token reaches your server.

```js
import { upload } from "@vercel/blob/client";

// Browser-side: file bytes go straight to Blob; "/api/upload" is an example token route.
const blob = await upload(file.name, file, { access: "public", handleUploadUrl: "/api/upload" });
```

What's the free tier limit?

On Vercel's Hobby plan, you get 10 GB of Fast Origin Transfer per month for free. My 402 MB puts me at about 4% of the limit — well within the free tier. But if you're running a high-traffic site with many SSR pages and large API responses, it's worth monitoring this metric monthly.

On the Pro plan, the limit increases significantly and overages are billed per GB rather than blocking your deployments.
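To put "monitoring monthly" into numbers, here is a small projection sketch. It assumes usage stays at a constant daily rate. At the upper end of my steady 20-30 MB per day, a 31-day month comes to about 930 MB, roughly 9% of the free allowance. The function name is mine. Whether 10 GB means 10,240 MB or 10,000 MB here is also an assumption, though the difference is only about 2.4%.

```typescript
// Rough month-end projection at a constant daily rate.
// Assumption: 10 GB = 10 * 1024 MB; pass a different allowance if needed.
const FREE_ALLOWANCE_MB = 10 * 1024;

function monthlyProjection(dailyMB: number, daysInMonth: number, allowanceMB = FREE_ALLOWANCE_MB) {
  const projectedMB = dailyMB * daysInMonth;
  return {
    projectedMB,
    pctOfAllowance: (projectedMB / allowanceMB) * 100,
    // Days it would take to use the whole allowance at this rate.
    daysToLimit: allowanceMB / dailyMB,
  };
}

const worstCase = monthlyProjection(30, 31);
// projectedMB: 930, pctOfAllowance ≈ 9.1, daysToLimit ≈ 341
```

If `daysToLimit` ever drops below the number of days in your billing period, that's the signal to start on the caching steps above.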

Conclusion

Fast Origin Transfer is simply the internal bandwidth between Vercel's CDN edge and your serverless compute. A high outgoing share (like my 74.6%) is normal: it just means your server is generating responses. To keep usage low, lean on static generation, ISR, response caching, and direct-to-Blob uploads wherever possible.

For most hobby and small production projects, the 10 GB free limit is more than enough. But now that you know what drives it, you're in a much better position to optimise if you ever need to.